The growth of artificial intelligence (AI) and machine learning (ML) has driven demand for specialized hardware at an unprecedented rate. One of the most important components enabling next-generation AI performance is High-Bandwidth Memory (HBM). As AI models grow larger and more complex, the parallel data throughput that HBM provides becomes critical, particularly in training and inference workloads where conventional DRAM cannot keep up.

This article covers where engineers can buy HBM memory in 2026, the sourcing challenges this technology presents, and how to find dependable suppliers for mission-critical AI applications.

The Rising Demand for HBM in AI/ML Workloads

AI models today — from large language models (LLMs) to advanced vision networks — require massive amounts of data movement between memory and processing units. HBM, with its vertically stacked architecture and wide I/O, delivers much higher bandwidth per watt than conventional memory types. This makes it ideal for:

- AI training clusters where multiple GPUs/TPUs communicate high volumes of data

- Edge AI systems that need low‑latency, high‑throughput memory

- Reconfigurable computing platforms (e.g., FPGAs) used in real‑time inference
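The bandwidth advantage of a wide interface can be illustrated with some back-of-the-envelope arithmetic. The Python sketch below is only an illustration: the function name is made up for this example, and the figures are assumptions based on commonly cited peak numbers (a 1024-bit interface at 6.4 Gb/s per pin for one HBM3 stack, and a 64-bit data bus at 6.4 Gb/s per pin for one DDR5-6400 module). Real parts vary, so check the vendor datasheet for the device you are considering.

```python
def peak_bandwidth_gb_per_s(bus_width_bits: int, pin_rate_gbit_per_s: float) -> float:
    """Theoretical peak bandwidth in GB/s: bus width (bits) x per-pin rate (Gb/s) / 8."""
    return bus_width_bits * pin_rate_gbit_per_s / 8


# Assumed example figures, not datasheet values for any specific part.
hbm3_stack = peak_bandwidth_gb_per_s(bus_width_bits=1024, pin_rate_gbit_per_s=6.4)
ddr5_module = peak_bandwidth_gb_per_s(bus_width_bits=64, pin_rate_gbit_per_s=6.4)

print(f"HBM3 stack (assumed):  {hbm3_stack:.1f} GB/s")    # 819.2 GB/s
print(f"DDR5 module (assumed): {ddr5_module:.1f} GB/s")   # 51.2 GB/s
print(f"Ratio: {hbm3_stack / ddr5_module:.0f}x")          # 16x
```

At the same per-pin rate, the difference comes entirely from interface width, which is why HBM depends on stacked dies and advanced packaging rather than conventional module connectors.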

Industry analysts forecast that demand for HBM will escalate as AI continues to penetrate sectors such as autonomous systems, healthcare diagnostics, and real-time analytics. As a result, engineers need a clear strategy for where to buy HBM memory in 2026, particularly when building bespoke or high-performance systems.

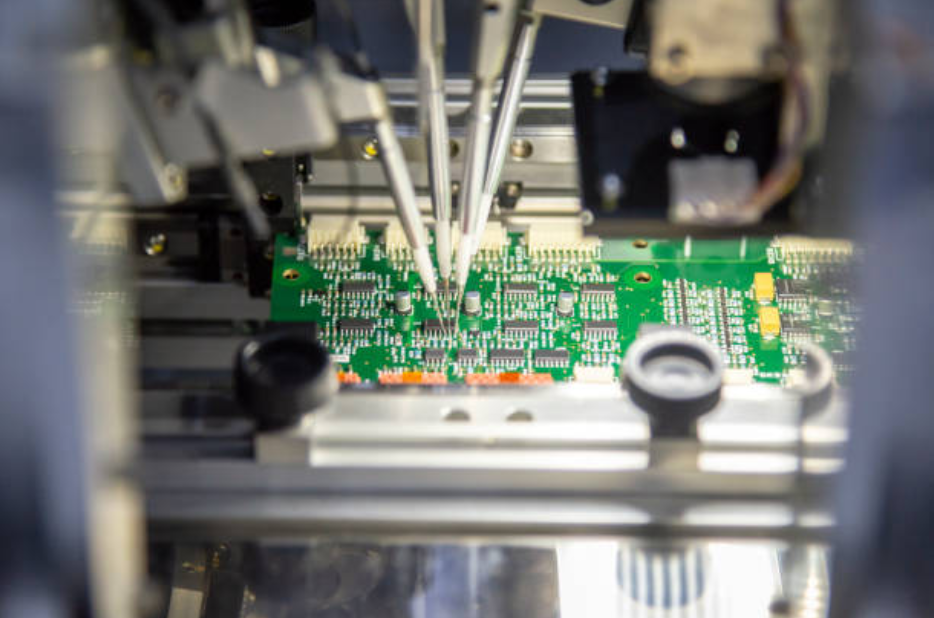

Getting hold of HBM isn’t as simple as buying regular memory modules. Manufacturing constraints, special packaging requirements, and tight integration with advanced processors all limit how and where it can be purchased. With that context, let’s take a look at the supply situation.

Key Suppliers and Where to Buy HBM Memory 2026

In 2026, a small number of manufacturers and distributors dominate the HBM supply chain, each with its own strengths. Engineers deciding where to buy HBM memory in 2026 should understand these suppliers and their offerings.

Major HBM Manufacturers

- Samsung Electronics – A top supplier of HBM2e and HBM3 memory solutions, Samsung produces high-bandwidth memory packaged for leading accelerator platforms.

- SK hynix – Known for delivering HBM3 products with strong performance metrics and power efficiency.

- Micron Technology – Focuses on custom HBM configurations aimed at enterprise and AI accelerator markets.

These manufacturers supply memory to board and system vendors, but engineers can also engage them directly through authorized channels or design partnerships when developing custom hardware.

Authorized Distributors

Distributors such as Avnet, Arrow Electronics, and Future Electronics partner with leading manufacturers to make components available to design engineers and OEMs. These channels can be extremely helpful when you need limited-run parts or custom configurations. Many of these distributors also support inventory reservation and allocation agreements, which are useful tools when you are working out where to buy HBM memory in 2026 in a supply-constrained environment.

Secondary Markets

Because of the surge in demand, some companies turn to secondary markets for high-performance parts. That can be a workable option when supplies are scarce, but engineers have to be careful to confirm that the parts are genuine and meet specifications. When considering secondary sources for HBM memory in 2026, verify the seller's traceability records and testing methodology.

Sourcing Challenges in 2026

Despite the growing need, sourcing HBM in 2026 still presents challenges. These include:

- Limited production capacity: HBM fabrication and packaging rely on advanced manufacturing nodes and specialized facilities. As AI demand grows faster than supply, allocation pressures can occur.

- Long lead times: Custom configurations integrated into bespoke systems may have lead times of several months.

- Pricing volatility: High‑performance memory markets can experience price swings due to demand cycles or supply chain disruptions.

Because of these issues, engineers need to plan ahead. That means forecasting demand, securing allocations early, and spreading orders across multiple suppliers. Knowing where to buy HBM memory in 2026 is as much a supplier-strategy question as a technical one.

Best Practices for Finding Reliable HBM Suppliers

Here are practical steps to help engineers source high‑bandwidth memory:

1. Partner with Authorized Distributors

Working with well‑established electronics distributors increases access to inventory and support services. These partners often offer:

- Stock visibility dashboards

- Priority allocation for key customers

- Warranty and return support

These services are crucial when you want to buy HBM memory in 2026 with minimal risk.

2. Leverage Component Sourcing Services

For teams without large purchasing resources, third-party component sourcing services can simplify the search for hard-to-find parts. These companies specialize in locating genuine inventory through global channels. The sourcing page explains how component sourcing services can help: it is a one-stop resource that covers best practices, buyer vetting, and standard delivery timelines.

3. Engage Directly with Manufacturers

If you’re developing highly customized AI hardware, direct engagement with manufacturers like Samsung, SK hynix, or Micron can yield design-in support and allocation commitments. This route gives you earlier visibility into production windows, an advantage when competition for supply is high.

4. Maintain Inventory Forecasts

Work with your supply chain and design teams to project memory demand as early as possible. Long-term forecasts increase your chances of securing HBM, whichever channel you choose for buying HBM memory in 2026.
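One simple way to turn a forecast into action is to work backward from each build date to the latest date an order can be placed. The Python sketch below is illustrative only: the `BuildPlan` structure, the 26-week lead time, the 4-week buffer, and the build dates and quantities are all hypothetical placeholders, not figures from any supplier. Substitute the lead times your distributor or manufacturer actually quotes.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class BuildPlan:
    name: str
    need_date: date  # date the parts must be on hand
    units: int       # quantity required for this build (hypothetical)


def latest_order_date(need_date: date, lead_time_weeks: int, buffer_weeks: int = 4) -> date:
    """Latest date to place an order: need date minus quoted lead time and a safety buffer."""
    return need_date - timedelta(weeks=lead_time_weeks + buffer_weeks)


# Assumed values for illustration; replace with your own forecast and quoted lead time.
ASSUMED_LEAD_TIME_WEEKS = 26
plans = [
    BuildPlan("pilot run", date(2026, 6, 1), 200),
    BuildPlan("volume ramp", date(2026, 10, 1), 2000),
]

# Print the order deadline for each planned build, earliest need date first.
for plan in sorted(plans, key=lambda p: p.need_date):
    order_by = latest_order_date(plan.need_date, ASSUMED_LEAD_TIME_WEEKS)
    print(f"{plan.name}: order {plan.units} units by {order_by.isoformat()}")
```

Even a rough calculation like this makes it clear how early allocation conversations need to start when lead times run to months.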

Conclusion

With AI and ML workloads spreading across industries, high-bandwidth memory is no longer a niche component – it’s essential for future-ready hardware. Engineers working on high-performance, data-intensive designs need to know where to buy HBM memory in 2026, how the market is behaving, and how to cope with supply challenges. By collaborating with authorized distributors, exploring component sourcing services, contacting manufacturers directly, and planning ahead, you can secure the high-bandwidth memory needed to fuel the AI innovations of tomorrow.